Study Finds Common Solution to Non-Falsifiability: Discourage the study of things which have not been ‘proven’.

or, Study Observes Reason For Compartmentalized Conspiracies

Re-posted on Steemit – December 2019 Followup Essay

A reaction to: On the Viability of Conspiratorial Beliefs, by David Robert Grimes in PLOS, January 26, 2016 http://dx.doi.org/10.1371/journal.pone.0147905

Simulations of these claims predict that intrinsic failure would be imminent even with the most generous estimates for the secret-keeping ability of active participants—the results of this model suggest that large conspiracies (≥1000 agents) quickly become untenable and prone to failure. The theory presented here might be useful in counteracting the potentially deleterious consequences of bogus and anti-science narratives, and examining the hypothetical conditions under which sustainable conspiracy might be possible.

So I finally got around to reading this recent study attempting to discredit the general viability of conspiratorial beliefs. It estimates the likelihood of failure for a conspiracy, over time, primarily given the number of people involved. This is done by examining ‘real’ conspiracies which failed to be kept secret, and have been widely accepted as factual by the general public.

To calibrate their metrics, the author chose three ‘real conspiracies’: the NSA PRISM program, the Tuskegee experiments, and the FBI forensic scandal. The author then applied the results to the generalized theories of moon landing fraud, climate-change fraud, vaccine-risk fraud, and suppressed cancer cures. Maths aside, there are fundamental problems with the required premises.

Non-Falsifiable Argument: “We can’t prove it because the conspiracy is working.”

The study collides directly and immediately with the catch-22 wall of non-falsifiability: a successful conspiracy is, by its nature, one that has not been exposed. People complain that the most extreme conspiracy theorists explain away problems in their theories by claiming the conspiracy goes ever deeper. Such minds can always allege centralized (or organic) control over more elements of a situation, to the point where it is impossible to disprove the theory (non-falsifiable).

There is a required assumption that models based on ‘real’, failed conspiracies are a viable metric to apply to ‘alleged’, still secret, successful conspiracies. “There is also an open question of whether using exposed conspiracies to estimate parameters might itself introduce bias and produce overly high estimates of p—this may be the case, but given the highly conservative estimates employed for other parameters, it is more likely that p for most conspiracies will be much higher than our estimate…” The author believes other assumptions are conservative enough to balance this impasse.

The author does make the obligatory gesture towards balance in disclaiming: “While the modern usage of conspiracy theory is often derogatory (pertaining to an exceptionally paranoid and ill-founded world-view) the definition we will use does not a priori dismiss all such theories as inherently false.”

“The estimates also make the assumption that all agents in the estimate are considered to have knowledge of the conspiracy at hand;”

The most comically glaring oversight is the lack of the word, or concept of, ‘compartmentalization’. By definition, compartmentalization exponentially reduces the number of people who have the information [and courage] to reveal the conspiracy. We humans tend to underestimate the exponential effect in all fields of study.

When it comes to the strategy of compartmentalization, I suppose the reader (and/or author) just didn’t need to know.

From Wikipedia’s article on Compartmentalization (information security):

In matters concerning information security, whether public or private sector, compartmentalization is the limiting of access to information to persons or other entities who need to know it in order to perform certain tasks.

The concept originated in the handling of classified information in military and intelligence applications…

The basis for compartmentalization is the idea that, if fewer people know the details of a mission or task, the risk or likelihood that such information will be compromised or fall into the hands of the opposition is decreased.

…

An example of compartmentalization was the Manhattan Project. Personnel at Oak Ridge constructed and operated centrifuges to isolate Uranium-235 from naturally occurring uranium, but most did not know what, exactly, they were doing. Those that did know, did not know why they were doing it. Parts of the weapon were separately designed by teams who did not know how the parts interacted.

The Manhattan Project was not included in the study’s data set either, but it was completed with 130,000 people and $2B (or $26B in 2016 dollars) and was successfully kept secret for at least four years. This project is of the same order of magnitude as the moon-landing participant estimates, and was successfully kept secret from the public until the intended reveal, longer than the period predicted in this study.

In these secretive areas, how much do workers know about what they are doing? Are they confident that what they are doing is for the greater good? Do the majority of conspirators in a well-laid plan even realize they are conspiring?

Did “taking out Osama bin Laden” require fewer than 1,000 people?

Given the strategy of compartmentalization, I can’t think of many alleged large ‘conspiracy theories’ which might actually require more than 100 people to have enough knowledge of a plan to be a golden bullet whistleblower. And for that matter, how many plans actually include any single task that requires more than 100 people to understand in order to accomplish?

If large or significant conspiracies are too big not to fail (as this study claims), then please, let’s at least call for an end to all covert operations within the CIA, NSA, FBI, etc… and their private contractors we pay for.

Is it not the job of covert agents to successfully conspire against ‘those who threaten this country’? Are conspiracies only effective against ‘the bad guys’? I think I have a viable belief that these agencies do find significant value in conspiring and that it tends to work (for someone involved), or they might have disbanded themselves already.

It is plausible that after a given conspiracy is complete, some unwitting members of some compartments would then realize what they had been a part of. But they would also realize that there had been tiers of control they had previously been unaware of. Especially if the conspiracy involved murder or mass-murder, you can bet they should fear for their own lives, should they speak up.

When It Comes To Science…

This study’s emphasis on conspiracy theories as related to scientific issues warrants a separate counterbalance. Is the author correct that “the vast majority of scientists in a field would have to mutually conspire”? Is enough scientific research even replicated by the broader community to require their complicity in ‘facts’ which have been ‘established’?

Technical jargon / terminology as a form of compartmentalization

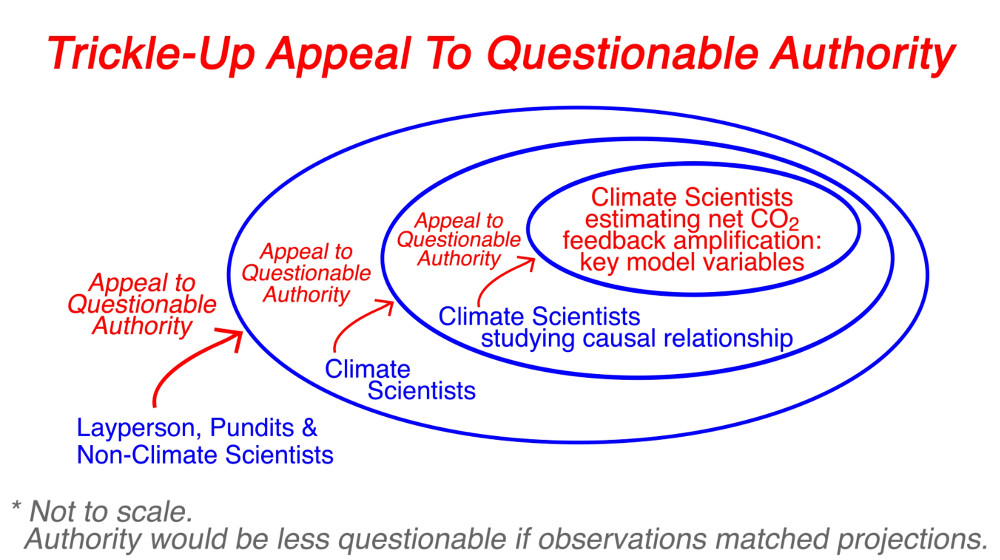

With the increasing complexity of the human knowledge-base, every discipline organically becomes increasingly compartmentalized due to its required specificity. This means the vast majority of scientists cannot be considered an authority on the work done by the vast majority of other scientists, and scholarly critique (checks and balances) must also be largely compartmentalized.

Beyond the glaring omission of compartmentalization, this study also fails to acknowledge the vital role of groupthink, required for larger, scientific, and/or open conspiracies to succeed.

I do not endorse attacks on the scientific method itself. By definition, the method evolves and updates the science. Scientific discoveries can be “the most correct” of their time, but they are not fully correct. Just as in politics, backward steps happen, and dissenters should be embraced and engaged. Some recent replication efforts have reportedly failed to reproduce a whopping 2/3 of the past peer-reviewed studies they re-tested.

It is inconsistent to claim that some scientists or consensus positions should be immune to — or vaccinated against — skepticism. Of course, some people have better and/or purer motives than others, or see their actions as means to better ends, but the scientific community is simply not immune to corruption.

Most areas of scientific research directly impact the money and/or power of some groups or interests. As a consequence, money and power are often used with significant influence on the scientific discussion, especially to claim a coveted ‘consensus’. In some cases, money might be the most important motive. In other cases, power might be a higher priority.

Before tobacco was widely accepted as deadly, did big tobacco successfully conspire to manufacture a scientific consensus that it was relatively safe? Absolutely. How many years or decades was this information successfully ‘kept secret’? Was every doctor who didn’t worry about their patients using tobacco ‘part of’ or ‘in on’ the conspiracy? Or were the vast majority just well-intentioned victims of groupthink?

Most agree that big oil interests have conspired to attack the scientific consensus on many issues. Does the conspiring of big oil interests require far fewer than 1,000 knowing agents, by the author’s metrics? Why should it be inconceivable for other interests to have conspired to support the scientific consensus, with their efficacy adjusted to their scale of money and power?

Can we simultaneously argue that big oil is capable of conspiring to change scientific consensus in a dangerous way… while arguing that vaccine manufacturers are not capable of such influence in their related fields (adjusted to the scale of their budgets)?

Remember, scientific history is also written by the victors.

One of the things which came out of “Climategate” was that the University of East Anglia researcher Phil Jones was intentionally trying not to share their climate data. Nature later reported that they “broke the law when dealing with requests for climate data, according to the UK Information Commissioner’s Office.”

Withholding important information from the scientific community (and the public) increases the power/leverage of their own data analysis. It also stifles the potential for the checks and balances that the author assumes always exist.

This should qualify as a ‘real’ ‘conspiracy’ taking place within a high echelon of the climate science establishment. I also might cite this as an example of compartmentalization in [presumably] non-military science. Phil Jones was most likely just trying to save the planet, and perhaps saw it as a benevolent conspiracy. But I guess we did not need to know.

The study estimates that a climate-change fraud/hoax would have collapsed in 3.70 years… if members of all scientific bodies are included in the conspiracy. The estimate, if only the scientists are in on the conspiracy, is 26.77 years. Of course, non-scientists have no authority to blow the whistle on bad science, so I am unsure why the first estimate has any serious value.

But I would take this further, and say that only the subset of scientists studying the net-amplification after the climate systems’ and sub-systems’ countless feedback loops (after man-made greenhouse gases are added) have any authority to really blow the whistle on the alarming consensus climate models.

My essays on climate change:

SolarNotBombs.org

Either way, it has indeed been 27 years since the first major climate change models. From the skeptic’s perspective, there have indeed been countless climate scientists ‘defecting’ over the past 5-10 years… significantly earlier than predicted in this study. They don’t usually use the words ‘hoax’ or ‘conspiracy’ because the much larger factors in this case are simply groupthink and good intentions. They are also scientists, and those terms lack the required nuance. So maybe the author’s math isn’t that bad; perhaps he’s just not listening to the whistleblowers for the ‘unreasonable’ conspiracy theories.

There’s one more superb irony worth mentioning. The Catastrophic Anthropogenic Global Warming Models’ Theory, especially when generally re-framed as ‘Climate Change’, is totally not falsifiable. Whenever predictions of man-made warming fail, the heat can always be allegedly hiding within another known unknown sub-system.

Over-claiming consensus certainty, attacking straw man positions, or silencing evidence-based views on scientific issues… is anti-science.

We all come to new information with bias, but let’s search for more consistency in our response and approach. I might have enjoyed a more balanced attempt at this study. It would have been better if they provided estimates which somehow factored in compartmentalization and groupthink to compare with the published estimates, and used more than three examples to establish the model and metrics.

But to my friends on the flip side, this article is unlikely to convince anyone who has found compelling evidence that some of the ‘alleged’ conspiracies (the ‘theories’) are at least worthy of investigation. <3

The fine print admits some conspiracy theories are likely true, but the headline and larger message are intended to generally discredit the study of them. Unfortunately, this study is almost worthless to an intelligent discussion of these sensitive and critically important issues.

People should be encouraged to study anything and everything.

Compartmentalization Thought Experiment: 5⁵

For the sake of argument, let’s say that five people can effectively keep a secret (and a cover story). This is perhaps especially true if their lives depended on keeping those secrets (or perhaps their whole families’ lives), by implicit or direct risk and/or threat.

Let’s say these five core conspirators (with full knowledge of a given plan) each directly control, or strongly influence, five unwitting accomplices, for 25 people in the second tier. Each of those 25 in turn directs five more unwitting accomplices, who might think they are only working with the one participant on one project or task, for 125 people in the third tier. And now we already have 150 people working unwittingly towards a plan which only 5 people really understand, by definition of the strategy of compartmentalization.
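To make the head count explicit, here is a minimal Python sketch of the hypothetical. The function name `tier_sizes` and its parameters are my own illustrative choices, and the fixed fan-out of five per person is just this thought experiment's assumption, not anything taken from the study.

```python
def tier_sizes(core=5, fanout=5, tiers=3):
    """Head count of each tier, top tier first.

    Assumes (hypothetically) that every participant directs exactly
    `fanout` people in the tier below.
    """
    return [core * fanout ** level for level in range(tiers)]


sizes = tier_sizes()          # [5, 25, 125]
knowing = sizes[0]            # only the top tier understands the plan
unwitting = sum(sizes[1:])    # everyone below works from a cover story

print(sizes, knowing, unwitting)  # prints: [5, 25, 125] 5 150
```

Changing `core` or `fanout` shows how sensitive the totals are to the assumed size of a group that can keep a secret.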

So this study requires some significant translation. In this hypothetical, three tiers of compartmentalization reduce the number of knowing participants from all 155 people involved to just 5. Perhaps there is some decent behavioral science on our ability to keep secrets. An established metric (instead of just 5) could use the number of all involved participants to estimate how many tiers of compartmentalization would be required to accomplish a given alternative theory.

In such a compartmentalized hierarchy, adding a fourth tier could then accomplish five separate tasks requiring more than 100 people, for a total of 775 partially unwitting participants. A fifth tier could accomplish 25 tasks requiring more than 100 people.

It takes only eight tiers to involve over 400,000 people, the order of magnitude estimated for the moon landing project. Higher-level omissions and/or deceptions regarding the larger goals of a conspiracy likely result in the vast majority of participants believing their work is for the cover stories.
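The tier count can be checked with a short Python sketch. The helper `tiers_needed` is a hypothetical name of mine, and it assumes the same uniform fan-out of five used throughout this thought experiment; real organizations would obviously be messier.

```python
def tiers_needed(total_people, core=5, fanout=5):
    """Smallest number of tiers whose combined head count reaches
    `total_people`, assuming a uniform fan-out at every level."""
    tiers, involved, size = 0, 0, core
    while involved < total_people:
        involved += size      # add the next tier down
        size *= fanout        # each member directs `fanout` more
        tiers += 1
    return tiers


print(tiers_needed(400_000))  # prints: 8
```

With these assumptions, seven tiers cover 97,655 people and eight tiers cover 488,280, so the eighth tier is the first to pass the 400,000 mark.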

In this hypothetical, with compartmentalization factored in, one would need the first four tiers (and elements of the fifth) to all be “in the know” in order to meet the study’s definition of a ‘large conspiracy’ as having greater than 1,000 knowing agents. Again, having more than a couple tiers really “in the know” defies the definition of compartmentalization, which is a strategy of effective conspiracies like the Manhattan Project.
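As a rough check of that last claim, the Python sketch below counts how far down the hierarchy knowledge would have to reach before more than 1,000 agents are "in the know". The function name `tiers_in_the_know` and the uniform fan-out of five are assumptions of this hypothetical only.

```python
def tiers_in_the_know(threshold=1000, core=5, fanout=5):
    """Return (full_tiers, extra): the number of complete tiers, plus the
    number of members of the next tier, needed for the count of knowing
    agents to first exceed `threshold`."""
    full_tiers, knowing, size = 0, 0, core
    while knowing + size <= threshold:
        knowing += size       # this whole tier is in on the plan
        full_tiers += 1
        size *= fanout
    return full_tiers, threshold + 1 - knowing


print(tiers_in_the_know())  # prints: (4, 221)
```

So the first four tiers (780 people) plus 221 members of the fifth would all need full knowledge, which is exactly the situation compartmentalization is designed to avoid.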